SiLU / Swish

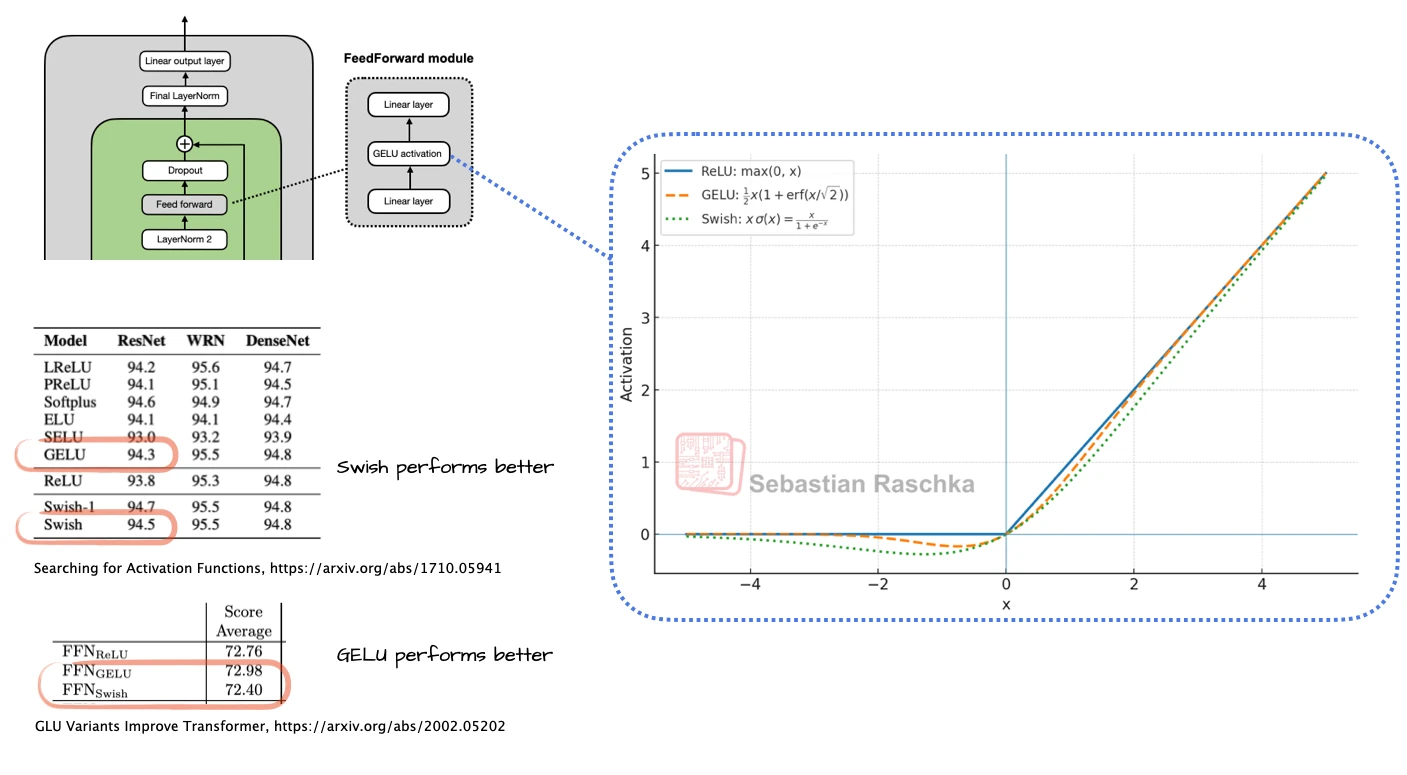

SiLU is the activation function that quietly took over modern decoder feed-forward blocks, especially once SwiGLU became the default MLP design.

In practice, the more important shift was not just from GELU to SiLU, but from ordinary two-layer MLPs to gated feed-forward blocks like SwiGLU. That is why SiLU matters in LLM architecture discussions: it is part of the feed-forward recipe that most strong current models now share.

Why It Exists

Activation functions used to be a much bigger open question than they are now. By the time decoder LLMs became dominant, the real decision was less about whether activations matter and more about which smooth activation gives a good tradeoff between optimization behavior and implementation simplicity. SiLU ended up being a very workable choice.

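SiLU is defined as x · sigmoid(x). That is computationally simpler than GELU, which weights x by the Gaussian CDF and is typically evaluated through an erf call or a tanh approximation. In practice, the quality differences between the two are usually not large enough to outweigh the engineering convenience.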
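To make the comparison concrete, here is a minimal scalar sketch of both functions using only the Python standard library. The function names are illustrative, and the GELU shown is the exact erf-based form; real frameworks often ship a tanh approximation as well.

```python
import math

def sigmoid(x: float) -> float:
    # Numerically stable logistic function: avoids overflow in exp() for large |x|.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def silu(x: float) -> float:
    # SiLU / Swish: the input scaled by its own sigmoid.
    return x * sigmoid(x)

def gelu(x: float) -> float:
    # Exact GELU: the input scaled by the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

if __name__ == "__main__":
    for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
        print(f"x={x:+.1f}  silu={silu(x):+.4f}  gelu={gelu(x):+.4f}")
```

Both curves are smooth, pass through zero, dip slightly below zero for negative inputs, and approach the identity for large positive inputs. The difference is mostly in how sharply they make that transition.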

Why SwiGLU Matters More Than SiLU Alone

In current LLMs, SiLU is often not used as a plain drop-in activation in a standard two-layer MLP. Instead, it appears inside a gated GLU-style feed-forward block, usually SwiGLU. That is the pattern you see in many architecture figures: a gate branch passed through SiLU, a parallel linear branch, and an elementwise product of the two before the projection back to the model dimension.

So the practical way to think about SiLU in modern LLMs is: it is the activation inside the default gated MLP recipe, not just a free-standing choice between two scalar functions.
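The gated block described above can be sketched in a few lines of plain Python. This is a toy version under stated assumptions: the weight names `W_gate`, `W_up`, and `W_down` are illustrative, biases are omitted, and lists stand in for the tensors a real framework would use.

```python
import math

def silu(x: float) -> float:
    # SiLU / Swish: x * sigmoid(x), with a branch to avoid exp() overflow.
    if x >= 0:
        return x / (1.0 + math.exp(-x))
    e = math.exp(x)
    return x * e / (1.0 + e)

def matvec(W, x):
    # Multiply a matrix (list of rows) by a vector.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def swiglu_ffn(x, W_gate, W_up, W_down):
    # Gate branch: linear projection followed by SiLU.
    gate = [silu(g) for g in matvec(W_gate, x)]
    # Parallel branch: linear projection with no activation.
    up = matvec(W_up, x)
    # Elementwise product of the two branches in the hidden dimension.
    hidden = [g * u for g, u in zip(gate, up)]
    # Projection back to the model dimension.
    return matvec(W_down, hidden)

if __name__ == "__main__":
    # Toy sizes: model dimension 2, hidden dimension 3 (hypothetical values).
    W_gate = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    W_up = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
    W_down = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    print(swiglu_ffn([1.0, 0.0], W_gate, W_up, W_down))
```

Note that the block needs three weight matrices where a classical MLP needs two, which is why SiLU here is better read as one ingredient of the block than as a standalone choice.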

Why Some Models Still Use GELU

This is not a hard rule. Some important model families, like Gemma, still use GELU. That is a useful reminder that SiLU is one of the cleaner defaults, not a universal law of architecture design.

In other words, if a modern architecture figure says FeedForward (SwiGLU), SiLU is part of the hidden recipe. If it says GELU, that model simply made a different tradeoff. That does not necessarily mean a classical ungated MLP: Gemma, for example, pairs GELU with a gated feed-forward block (GeGLU).
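One way to see that the activation and the block structure are separate choices is to write both feed-forward shapes with the activation as a parameter. The sketch below is illustrative only: the function and weight names are hypothetical, biases are omitted, and it does not reproduce any particular model's implementation.

```python
import math

def gelu(x: float) -> float:
    # Exact GELU: x scaled by the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def silu(x: float) -> float:
    # SiLU / Swish: x * sigmoid(x), with a branch to avoid exp() overflow.
    if x >= 0:
        return x / (1.0 + math.exp(-x))
    e = math.exp(x)
    return x * e / (1.0 + e)

def matvec(W, x):
    # Multiply a matrix (list of rows) by a vector.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def classic_mlp(x, W_in, W_out, act=gelu):
    # Classical two-layer MLP: project up, activate, project back.
    return matvec(W_out, [act(h) for h in matvec(W_in, x)])

def gated_mlp(x, W_gate, W_up, W_down, act=silu):
    # Gated block: activated gate branch times a linear branch, then project back.
    gate = [act(g) for g in matvec(W_gate, x)]
    up = matvec(W_up, x)
    return matvec(W_down, [g * u for g, u in zip(gate, up)])
```

With `act=silu` the gated block is SwiGLU, and with `act=gelu` the same structure becomes a GELU-gated variant, so a figure's activation label alone does not pin down which block shape is in use.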

How To Read It In The Gallery

On the gallery page, SiLU usually appears indirectly through labels like SwiGLU or through models that explicitly mention a shared SwiGLU path. It is not as visually distinctive as MLA or MoE, but it is part of the common background recipe that makes many recent decoders look so similar in their feed-forward blocks.