Hello, I'm Sebastian Raschka, PhD

I am an LLM Research Engineer with over a decade of experience in artificial intelligence. My work bridges academia and industry, including roles as a senior engineer at Lightning AI and as a statistics professor at the University of Wisconsin-Madison.

I am also the author of Build a Large Language Model (From Scratch).

My expertise lies in LLM research and the development of high-performance AI systems, with a deep focus on practical, code-driven implementations. (For my most up-to-date CV details, please visit my LinkedIn profile.)

Recent Notes and Blog Entries

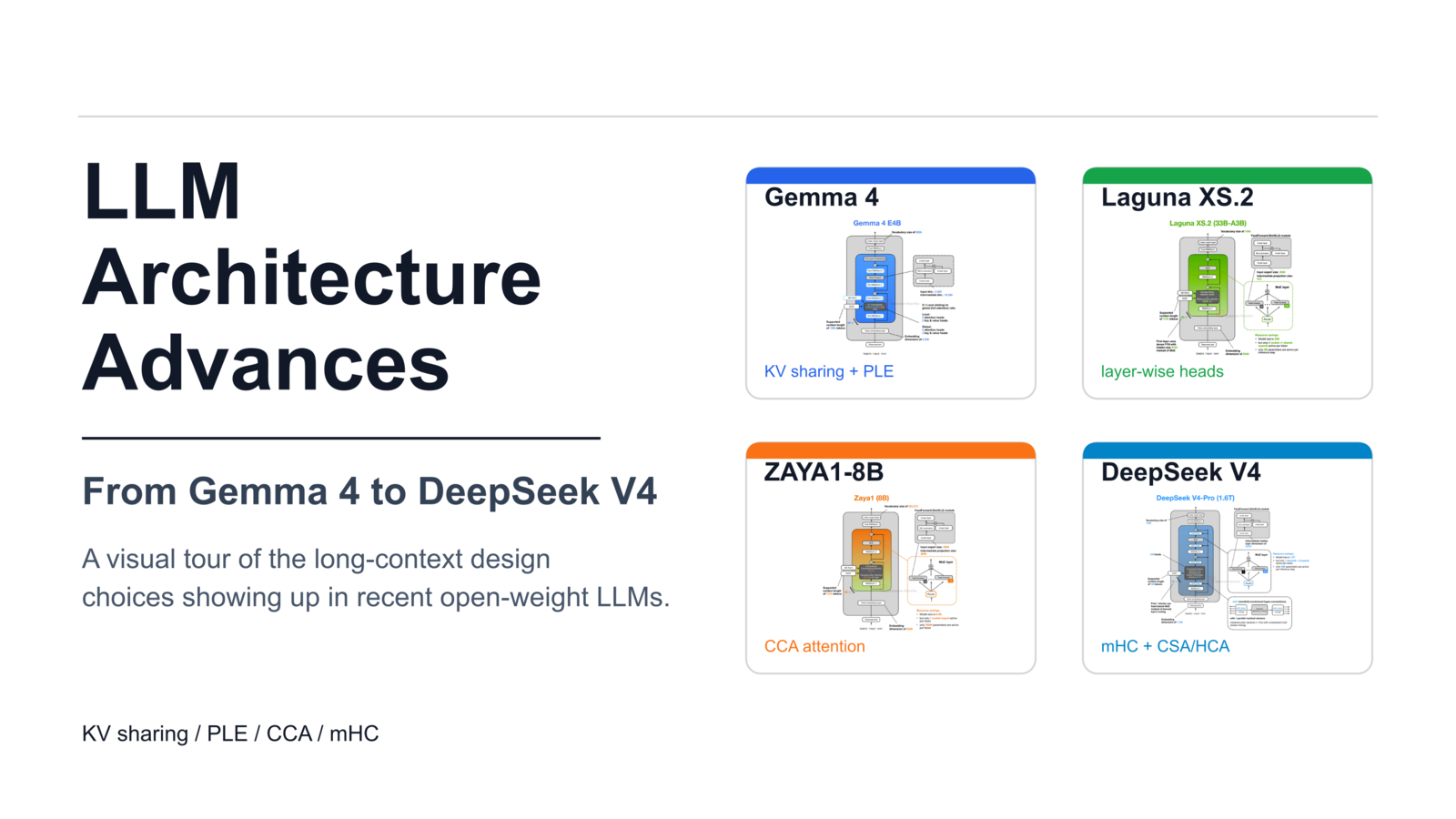

Recent Developments in LLM Architectures: KV Sharing, mHC, and Compressed Attention

May 16, 2026

From Gemma 4 to DeepSeek V4, How New Open-Weight LLMs Are Reducing Long-Context Costs

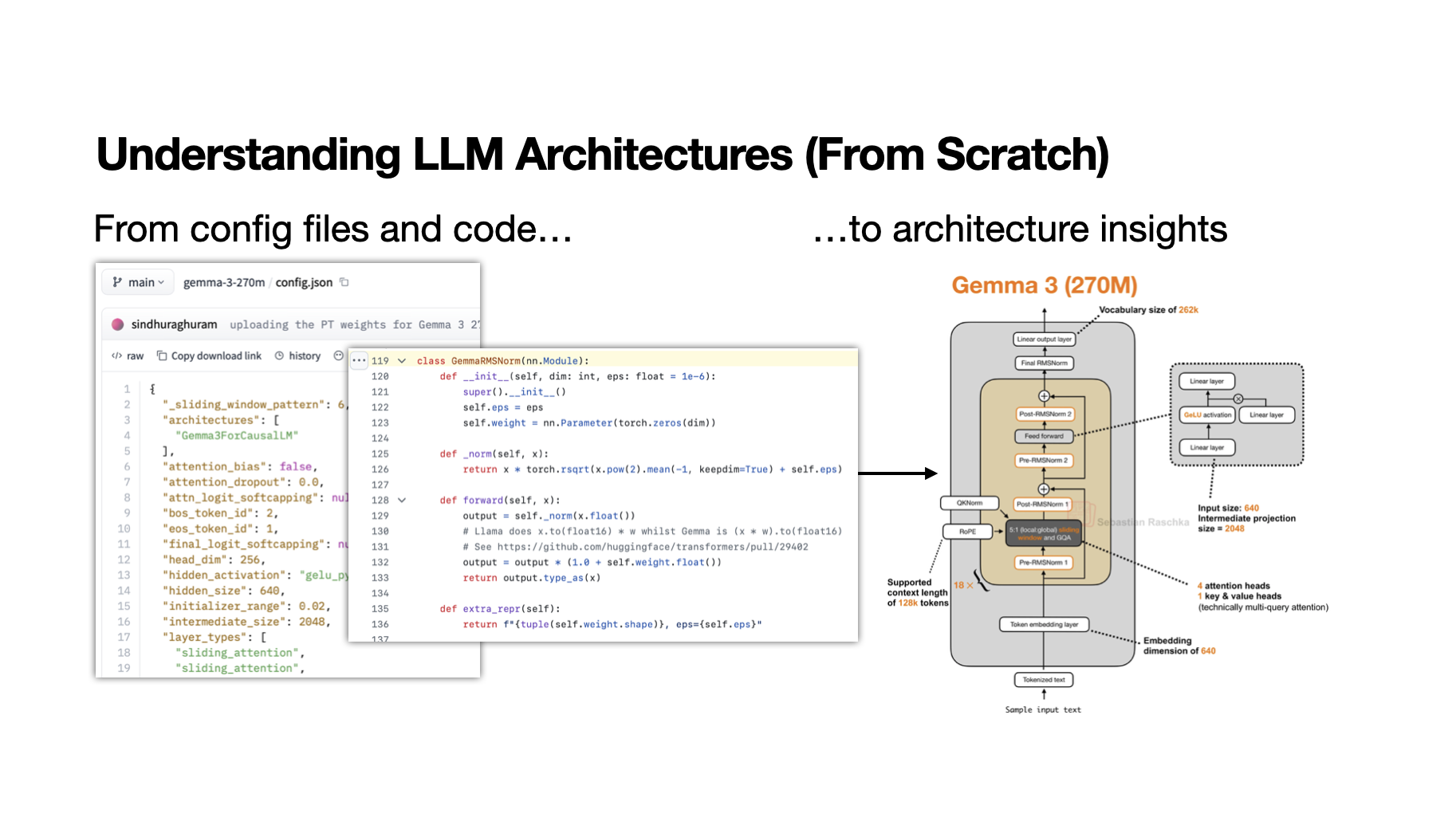

My Workflow for Understanding LLM Architectures

Apr 18, 2026

A learning-oriented workflow for understanding new open-weight model releases

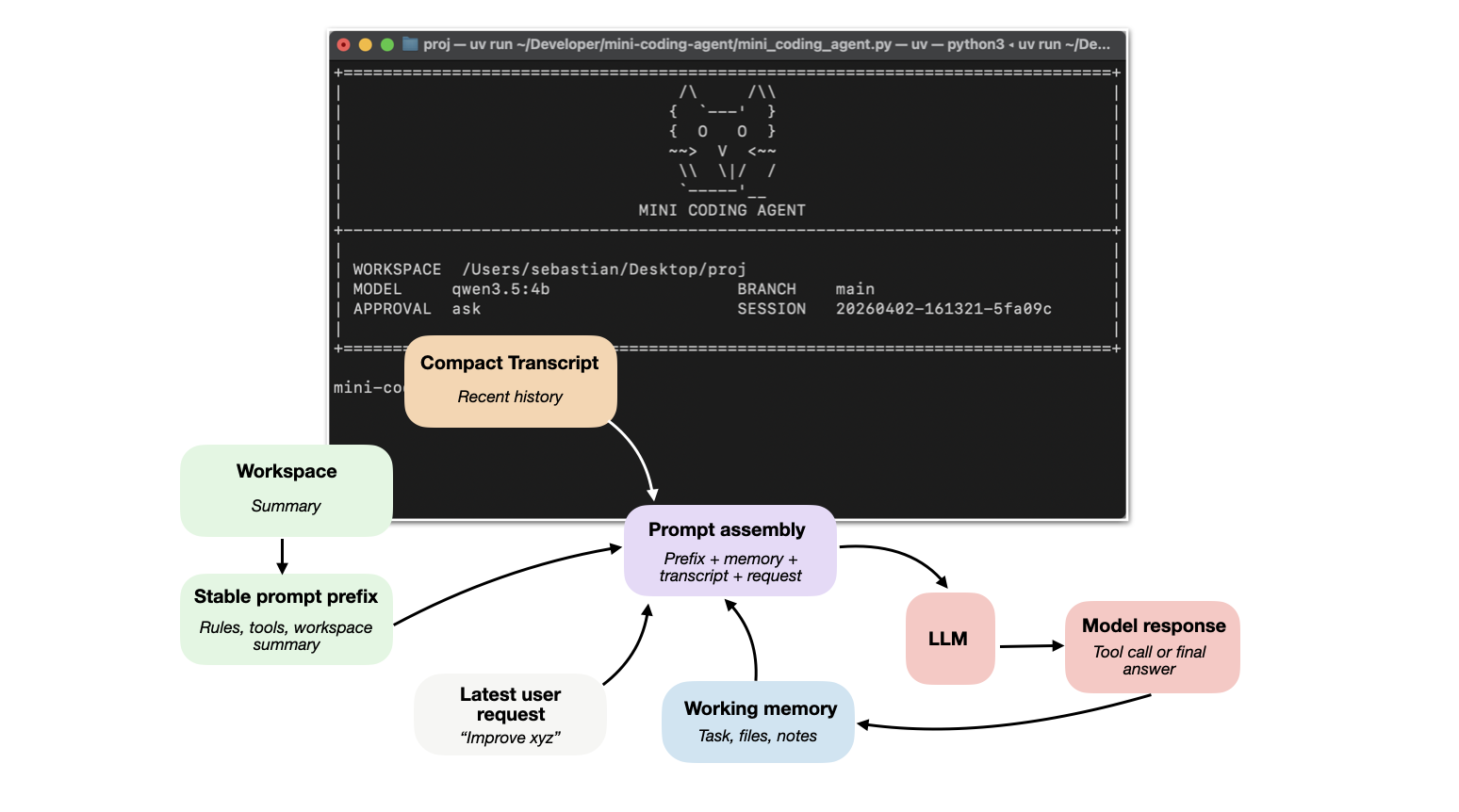

Apr 4, 2026

How coding agents use tools, memory, and repo context to make LLMs work better in practice

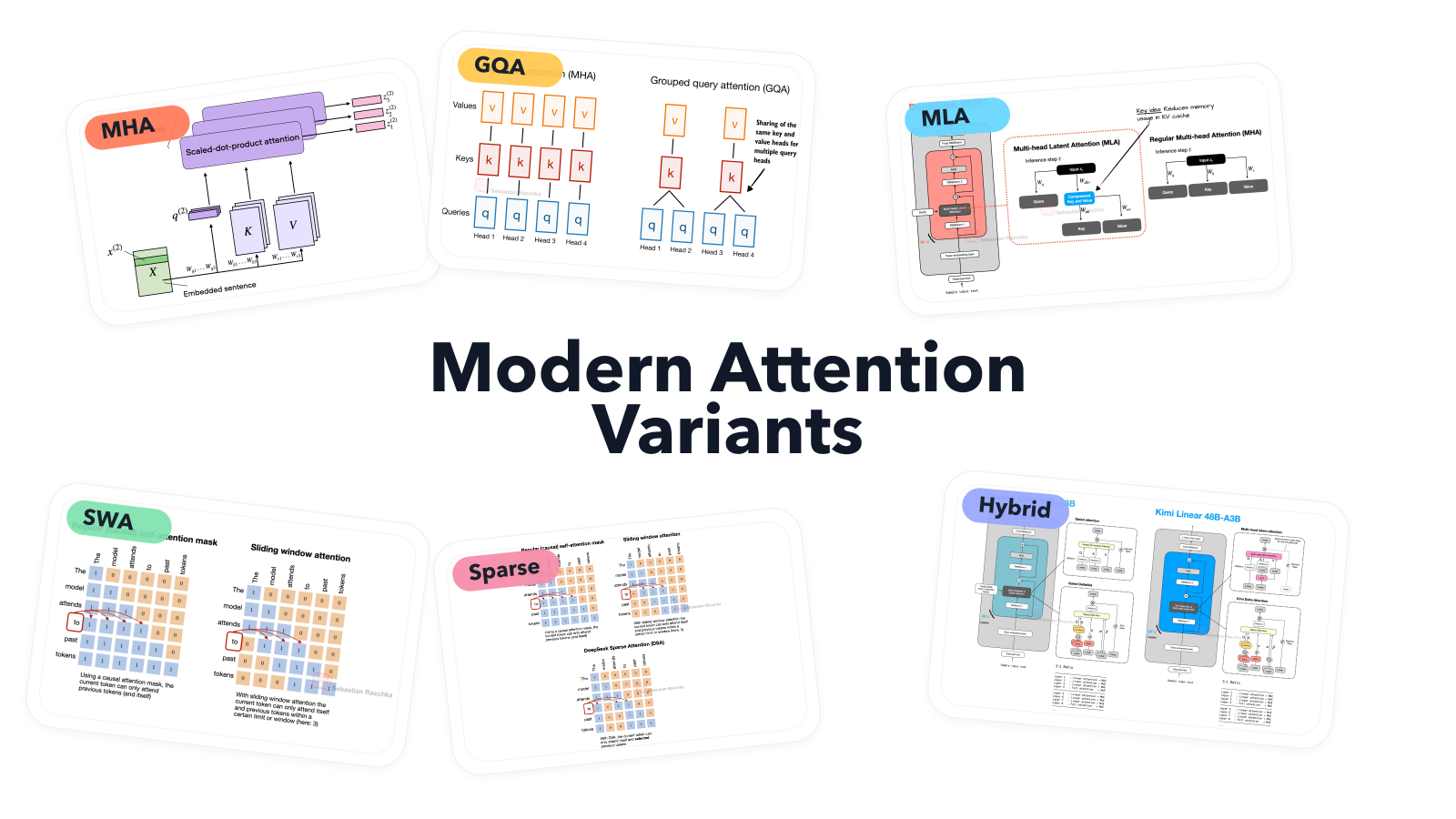

A Visual Guide to Attention Variants in Modern LLMs

Mar 22, 2026

From MHA and GQA to MLA, sparse attention, and hybrid architectures

Mar 14, 2026

Visual gallery of LLM architecture variants: attention mechanisms, positional encodings, MoE, and more, with comparison figures and compact reference sheets.