Machine Learning FAQ

What is SwiGLU, and why is it common in modern LLM feed-forward layers?

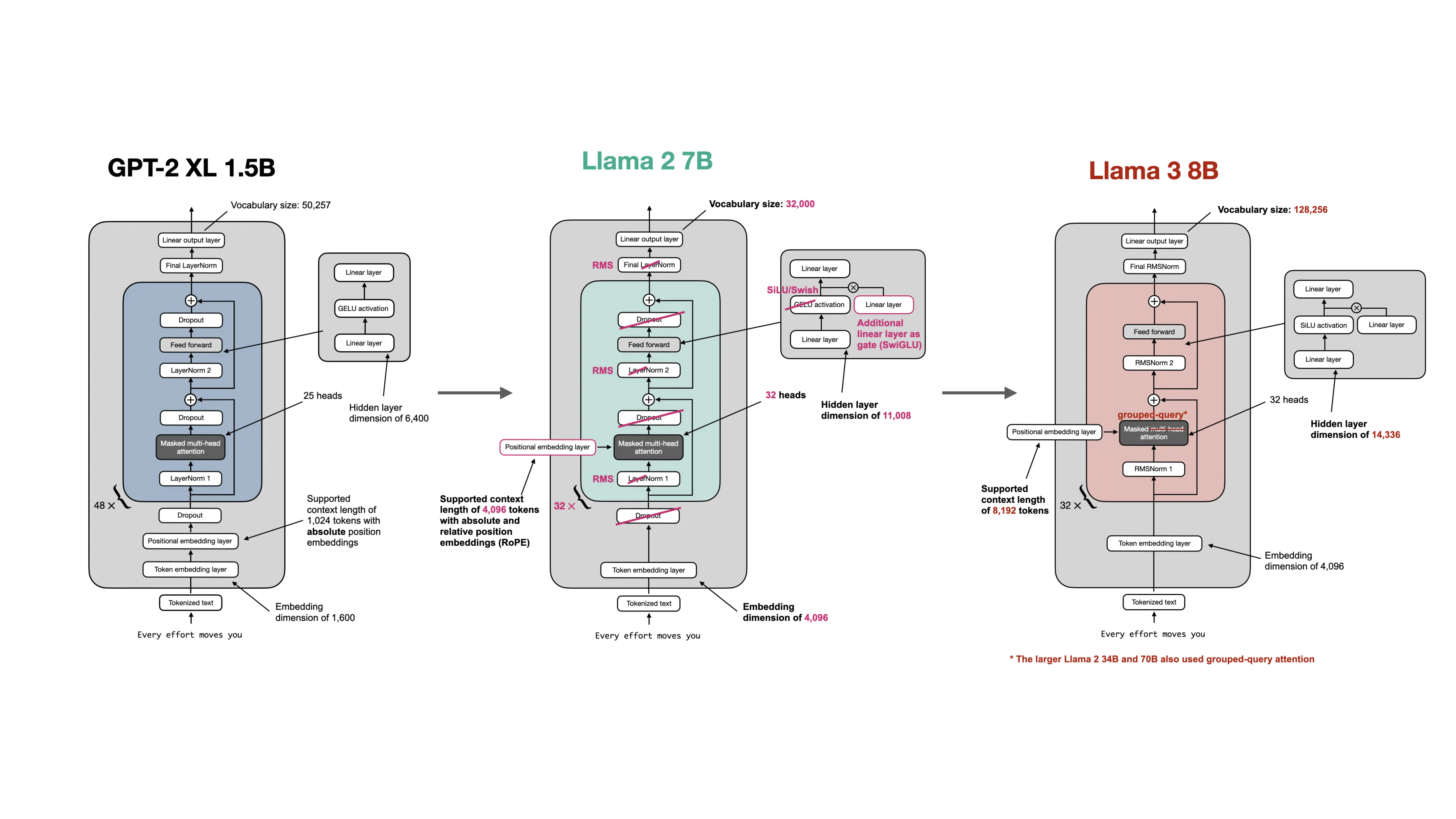

SwiGLU is a gated feed-forward layer used in many modern LLMs, including the Llama family. It replaces the plain two-layer MLP with a GELU activation found in GPT-style models such as GPT-2. It is common because it gives the model a more expressive way to control which features get amplified or suppressed.

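In a transformer block, the feed-forward sublayer matters a great deal. With the usual hidden size of 4× the embedding dimension, it holds about two-thirds of the weight-matrix parameters in a GPT-style block, and standard multi-head attention holds the rest. So even a modest improvement there pays off across the whole model.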
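To put a number on that share, the short calculation below counts weight-matrix parameters in one GPT-2-style block. It assumes standard multi-head attention (four d×d projections for Q, K, V, and output) and a two-layer MLP with hidden size 4·d, and it ignores biases and LayerNorm weights. The function name `block_weight_counts` is hypothetical.

```python
def block_weight_counts(d_model: int, ffn_mult: int = 4) -> dict:
    """Count weight-matrix parameters in one GPT-style transformer block.

    Assumptions: multi-head attention with Q, K, V, and output projections
    (each d_model x d_model) and a two-layer MLP with hidden size
    ffn_mult * d_model. Biases and LayerNorm weights are ignored.
    """
    attention = 4 * d_model * d_model
    d_hidden = ffn_mult * d_model
    ffn = 2 * d_model * d_hidden  # up projection + down projection
    return {
        "attention": attention,
        "ffn": ffn,
        "ffn_share": ffn / (attention + ffn),
    }


counts = block_weight_counts(768)  # embedding size of GPT-2 small
print(counts["attention"])            # 2359296
print(counts["ffn"])                  # 4718592
print(round(counts["ffn_share"], 3))  # 0.667
```

Under these assumptions the ratio is 8d² / 12d² = 2/3 for any embedding size; designs with grouped-query attention or a different hidden-size multiplier land elsewhere.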

The basic intuition behind SwiGLU is:

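- one projection carries candidate features

- another projection produces a gate

- a SiLU (Swish-style) nonlinearity, SiLU(z) = z · sigmoid(z), makes that gate smooth and input-dependent

- the gate and the candidate features are multiplied elementwise, and a final projection maps the result back to the model dimension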
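Written as equations, the intuition above corresponds to the following (bias terms omitted). The first line is the GPT-style GELU MLP for comparison. The labels $W_1$ (gate), $W_3$ (candidate features), and $W_2$ (output projection) are an illustrative choice, with $x \in \mathbb{R}^{d}$, $W_1, W_3 \in \mathbb{R}^{d \times d_{ff}}$, $W_2 \in \mathbb{R}^{d_{ff} \times d}$, and $\odot$ the elementwise product.

$$
\begin{aligned}
\mathrm{FFN}_{\mathrm{GELU}}(x) &= \mathrm{GELU}(x W_1)\, W_2 \\
\mathrm{SiLU}(z) &= z \cdot \sigma(z) = \frac{z}{1 + e^{-z}} \\
\mathrm{FFN}_{\mathrm{SwiGLU}}(x) &= \bigl(\mathrm{SiLU}(x W_1) \odot x W_3\bigr)\, W_2
\end{aligned}
$$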

So instead of pushing every hidden feature through the same fixed nonlinearity, the model can modulate which parts of the signal should pass through strongly.
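A minimal sketch of a single SwiGLU forward pass makes the gating visible. It uses plain Python lists instead of a tensor library so it runs anywhere. The helper names (`silu`, `matvec`, `swiglu_ffn`) and the hand-picked toy weights are assumptions for illustration, not code from any particular model.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # row-major, shape (in_features, out_features)


def silu(z: float) -> float:
    """SiLU / Swish with beta = 1, i.e. z * sigmoid(z), computed stably."""
    if z >= 0:
        return z / (1.0 + math.exp(-z))
    e = math.exp(z)  # avoids overflow of exp(-z) for very negative z
    return z * e / (1.0 + e)


def matvec(x: Vector, w: Matrix) -> Vector:
    """Compute the row vector x @ w."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def swiglu_ffn(x: Vector, w_gate: Matrix, w_up: Matrix, w_down: Matrix) -> Vector:
    gate = [silu(g) for g in matvec(x, w_gate)]  # smooth, input-dependent gate
    up = matvec(x, w_up)                         # candidate features
    hidden = [g * u for g, u in zip(gate, up)]   # elementwise gating
    return matvec(hidden, w_down)                # project back to d_model


# Toy example: d_model = 2, hidden size = 3, weights chosen by hand.
x = [1.0, 2.0]
w_gate = [[4.0, -4.0, 0.0],
          [0.0, 0.0, 0.0]]  # gate pre-activations: [4, -4, 0]
w_up = [[1.0, 1.0, 1.0],
        [1.0, 1.0, 1.0]]    # candidate features: [3, 3, 3]
w_down = [[1.0, 0.0],
          [0.0, 1.0],
          [1.0, 1.0]]
print(swiglu_ffn(x, w_gate, w_up, w_down))  # roughly [11.78, -0.22]
```

All three hidden units carry the same candidate value (3), but the first gate is wide open (SiLU(4) ≈ 3.93), the second is nearly closed (SiLU(-4) ≈ -0.07), and the third is shut (SiLU(0) = 0). In a plain GELU MLP, each hidden unit's output is a fixed function of a single pre-activation; here the gate and the value come from separate projections.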

That extra flexibility is the usual explanation for SwiGLU's popularity, and the empirical results back it up: compared with a plain GELU MLP at a matched parameter count, SwiGLU often gives better model quality for the same compute in large language models.
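Because SwiGLU uses three weight matrices instead of two, a fair comparison with a GELU MLP usually shrinks its hidden size. A common convention, used in the original GLU-variants experiments and in Llama-style models, scales the 4·d hidden size by 2/3 and then rounds up to a hardware-friendly multiple. The sketch below assumes that rule with a multiple of 256; the function names are hypothetical.

```python
def gelu_mlp_params(d_model: int) -> int:
    """Weights in a GPT-style MLP: up (d x 4d) + down (4d x d), no biases."""
    d_hidden = 4 * d_model
    return 2 * d_model * d_hidden


def swiglu_hidden_dim(d_model: int, multiple_of: int = 256) -> int:
    """Shrink the 4*d hidden size by 2/3, then round up to a multiple.

    multiple_of=256 is an assumed, hardware-friendly choice.
    """
    hidden = int(2 * (4 * d_model) / 3)
    return multiple_of * ((hidden + multiple_of - 1) // multiple_of)


def swiglu_params(d_model: int, multiple_of: int = 256) -> int:
    """Weights in a SwiGLU FFN: gate (d x h) + up (d x h) + down (h x d)."""
    d_hidden = swiglu_hidden_dim(d_model, multiple_of)
    return 3 * d_model * d_hidden


d = 4096
print(swiglu_hidden_dim(d))  # 11008
print(gelu_mlp_params(d))    # 134217728
print(swiglu_params(d))      # 135266304
```

With d = 4096 the two feed-forward variants end up within 1% of each other in parameter count, which keeps the quality comparison roughly like-for-like. Without the rounding step (multiple_of=1) and with d divisible by 3, the counts match exactly.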

The repo’s GPT-to-Llama material treats this as one of the hallmark changes from older GPT-style stacks to more modern Llama-style stacks. The overall transformer blueprint stays the same, but the feed-forward block becomes more capable.

This is also why SwiGLU shows up beyond dense models. In mixture-of-experts (MoE) architectures, each expert is often itself a SwiGLU-style feed-forward module. In other words, once the community settled on gated feed-forward blocks as a strong default, that choice carried over into newer sparse architectures too.
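A minimal sketch of that pattern, in plain Python for a single token: a router scores every expert, the top-k experts (each a SwiGLU feed-forward module) process the token, and their outputs are combined with softmax weights renormalized over the selected experts. The top-k-then-softmax routing, the class names `SwiGLUExpert` and `TinyMoE`, and the random initialization are illustrative assumptions; real MoE layers differ in routing details, load balancing, and batching.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]  # shape (in_features, out_features)


def silu(z: float) -> float:
    """Numerically stable z * sigmoid(z)."""
    if z >= 0:
        return z / (1.0 + math.exp(-z))
    e = math.exp(z)
    return z * e / (1.0 + e)


def matvec(x: Vector, w: Matrix) -> Vector:
    """Compute the row vector x @ w."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


class SwiGLUExpert:
    """One expert: a SwiGLU feed-forward module."""

    def __init__(self, d_model: int, d_hidden: int, rng: random.Random):
        self.w_gate = random_matrix(d_model, d_hidden, rng)
        self.w_up = random_matrix(d_model, d_hidden, rng)
        self.w_down = random_matrix(d_hidden, d_model, rng)

    def __call__(self, x: Vector) -> Vector:
        gate = [silu(g) for g in matvec(x, self.w_gate)]
        up = matvec(x, self.w_up)
        return matvec([g * u for g, u in zip(gate, up)], self.w_down)


class TinyMoE:
    """Hypothetical top-k mixture-of-experts layer over SwiGLU experts."""

    def __init__(self, d_model: int, d_hidden: int, num_experts: int,
                 top_k: int, seed: int = 0):
        rng = random.Random(seed)
        self.experts = [SwiGLUExpert(d_model, d_hidden, rng) for _ in range(num_experts)]
        self.w_router = random_matrix(d_model, num_experts, rng)
        self.top_k = top_k

    def route(self, x: Vector) -> List[Tuple[int, float]]:
        """Pick the top-k experts and softmax-normalize their router logits."""
        logits = matvec(x, self.w_router)
        chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[: self.top_k]
        m = max(logits[i] for i in chosen)  # subtract max for numerical stability
        exps = [math.exp(logits[i] - m) for i in chosen]
        total = sum(exps)
        return [(i, e / total) for i, e in zip(chosen, exps)]

    def __call__(self, x: Vector) -> Vector:
        out = [0.0] * len(x)
        for idx, weight in self.route(x):  # only the selected experts run
            y = self.experts[idx](x)
            out = [o + weight * yi for o, yi in zip(out, y)]
        return out


moe = TinyMoE(d_model=4, d_hidden=8, num_experts=4, top_k=2)
token = [0.5, -1.0, 0.25, 2.0]
print(moe.route(token))  # two (expert_index, weight) pairs whose weights sum to 1
print(moe(token))        # a 4-dimensional output vector
```

The sparsity comes from the router: every expert has the same SwiGLU structure, but each token pays the compute cost of only `top_k` of them.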

So why is SwiGLU common in modern LLMs?

- it gives the feed-forward layer a stronger gating mechanism

- it often improves model quality for a similar compute budget

- it fits well with other Llama-style architectural refinements

In short, SwiGLU is a gated feed-forward design built around a SiLU-style gate, and it is common in modern LLMs because it usually provides a more expressive and effective feed-forward block than the older plain GELU MLP pattern.