Machine Learning FAQ

How does instruction finetuning make a base model more useful in practice?

Instruction finetuning makes a base model more useful by teaching it to interpret prompts as requests to satisfy, not just as text prefixes to continue.

A pretrained base model is often good at text completion, but that does not automatically mean it is good at being helpful. If you give it an instruction, it may continue in an odd style, ignore the requested format, or drift into a generic completion instead of producing the kind of answer a user expects.

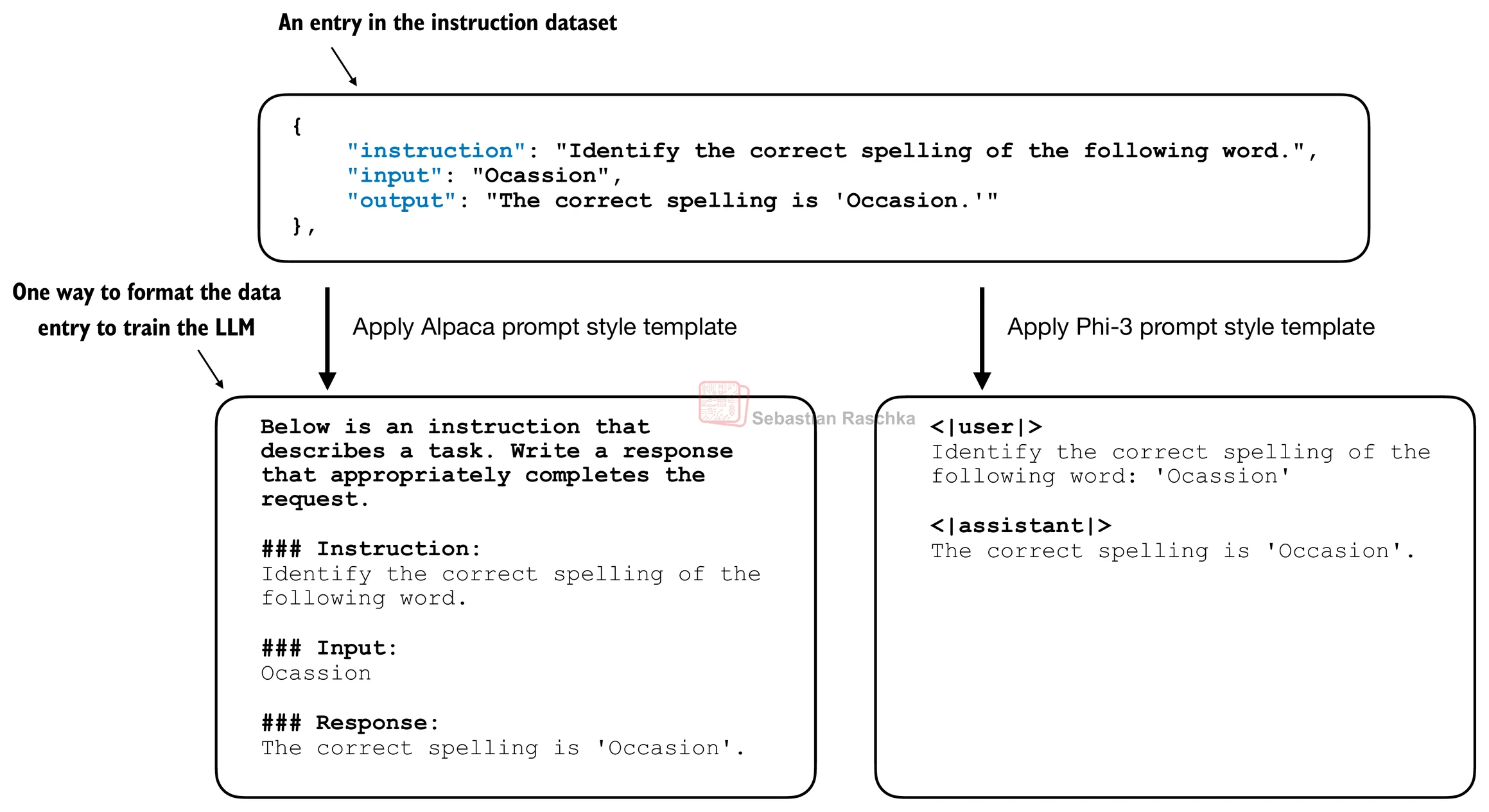

Instruction finetuning addresses this by training the model on many examples of:

- an instruction

- an optional input or context

- a desired response

This changes the model’s behavior in several practical ways.

First, it improves instruction following. The model gets many examples showing that when the prompt asks for a summary, translation, classification, rewrite, explanation, or transformation, the response should directly carry out that task.

Second, it improves response formatting. The model learns how answers are typically structured in the training dataset, including how to separate prompt and answer regions.

Third, it improves task generality. Unlike narrow classification finetuning, instruction finetuning can cover many different tasks in one dataset, which helps the model behave like a general assistant rather than a single-purpose tool.

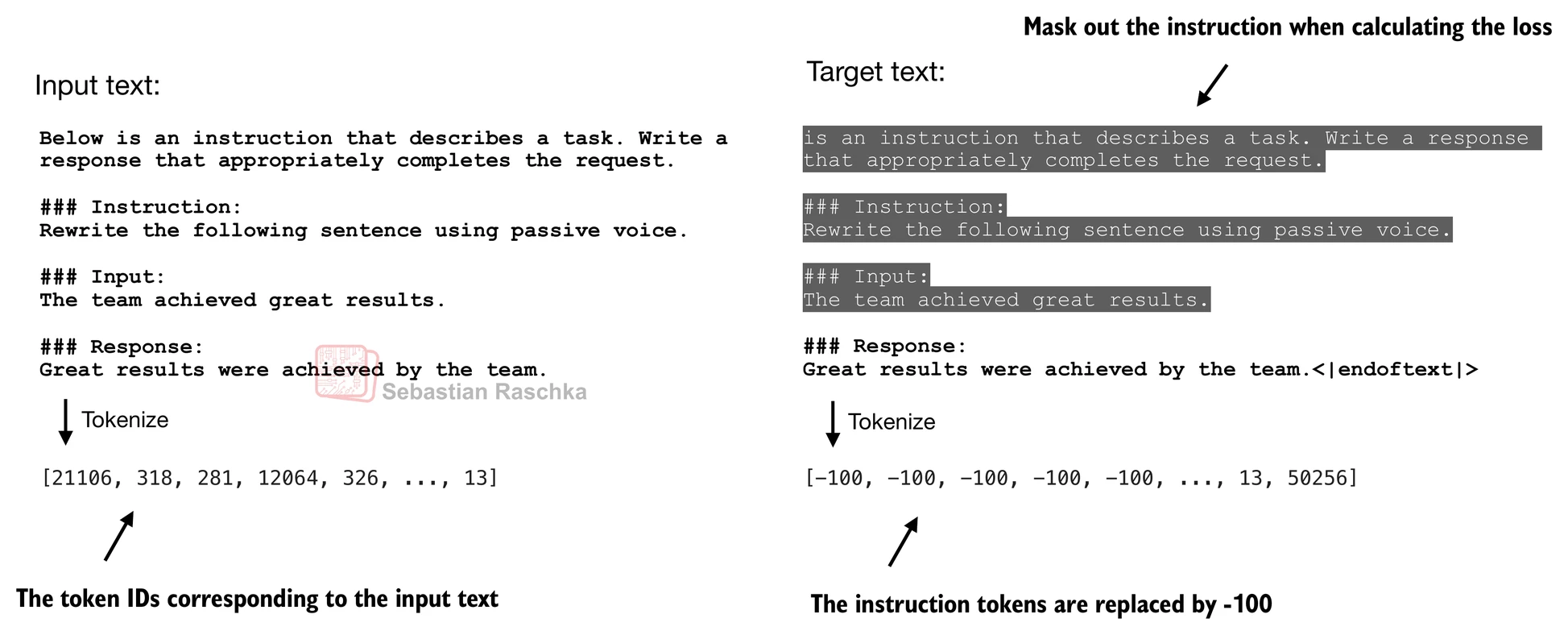

Fourth, it can improve response focus. In many instruction-tuning setups, the training loss emphasizes the response tokens instead of treating the prompt tokens as equally important targets. The chapter 7 material in the repo illustrates masking prompt tokens out of the loss so the optimization focuses on producing better answers.

The important point is that the underlying model may still have roughly the same knowledge base from pretraining. Instruction finetuning does not magically create all capabilities from scratch. Instead, it reshapes how the pretrained model uses what it already learned so that it behaves more helpfully in an interactive setting.

That is why instruct models often feel much more usable than base models even when they share the same architecture. The difference is not only what they know, but how they were trained to respond.

So, in practice, instruction finetuning makes a base model more useful by:

- making prompts work more reliably

- improving answer style and structure

- increasing consistency across tasks

- making the model behave more like an assistant and less like an unconstrained text completer

In short, instruction finetuning takes the broad capabilities learned during pretraining and aligns them with prompt-response behavior that is much more useful for real users.