Machine Learning FAQ

What are good first optimizations before moving from one GPU to multi-GPU training?

Before moving from one GPU to multi-GPU training, it usually makes sense to exhaust the simpler single-GPU optimizations first. They are easier to debug, cheaper to run, and often deliver surprisingly large gains.

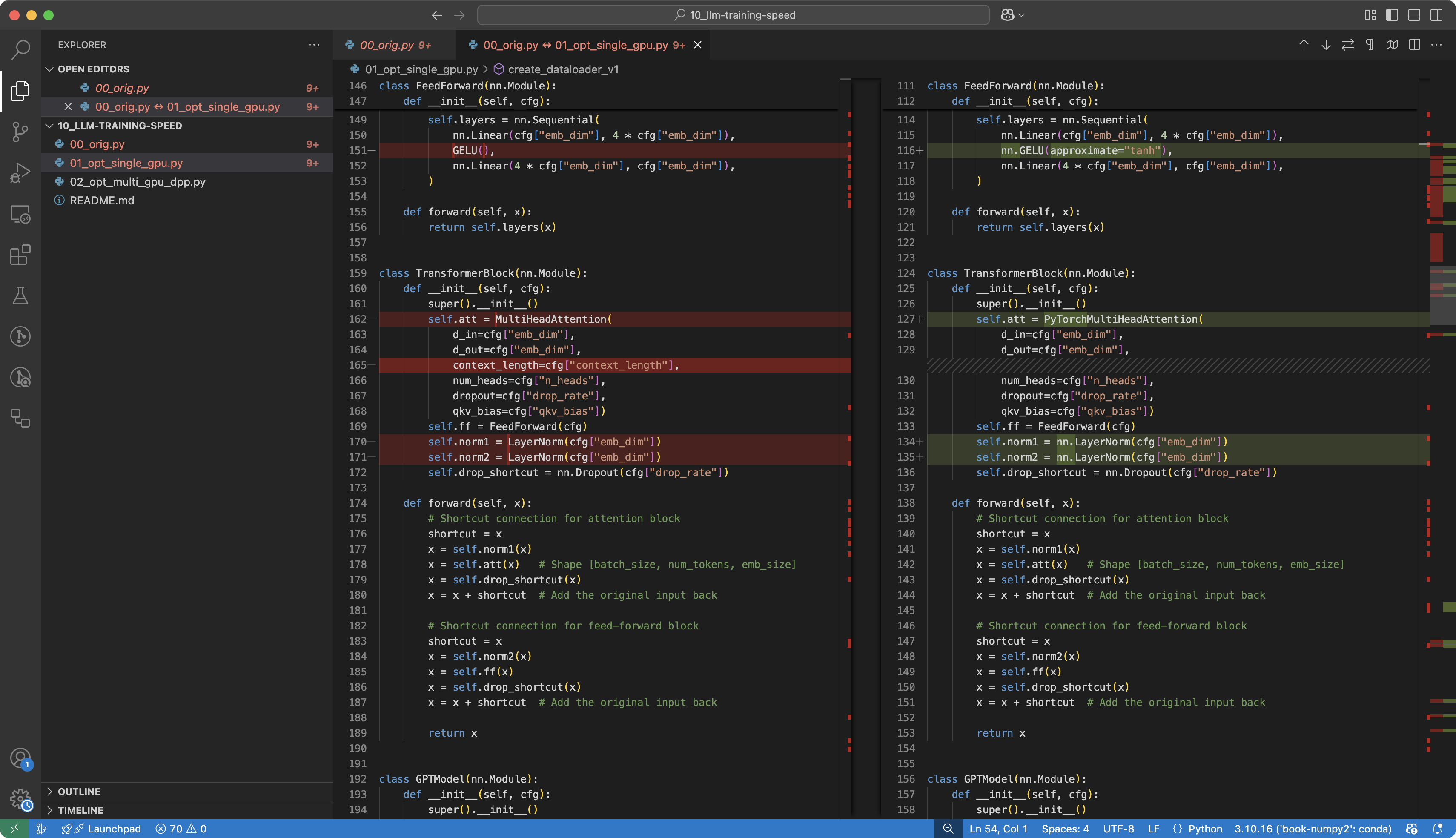

The repo’s training-speed material is a good example: a long sequence of single-GPU improvements dramatically increased throughput before distributed training entered the picture.

Good first optimizations include:

- using

bfloat16 - enabling tensor-core-friendly settings

- using fused optimizers

- enabling pinned memory in the data loader

- replacing from-scratch kernels with optimized PyTorch implementations where appropriate

- using FlashAttention

- trying

torch.compile - tuning batch size after the other optimizations are in place

These changes matter because distributed training adds a lot of complexity:

- process-group setup

- synchronization overhead

- harder debugging

- more failure modes

So if a single GPU is still leaving obvious speed or memory gains unused, multi-GPU is usually not the first thing to fix.

The practical rule is to move to multi-GPU when:

- the optimized single-GPU path is already reasonably efficient

- the model or data scale truly exceeds one GPU

- the added complexity is justified

In short, good first optimizations before multi-GPU training are mixed precision, efficient kernels, fused optimizers, better data loading, and compilation, because those changes often unlock large gains without distributed-training complexity.